This is the personal homepage of Grant Jun Otsuki. I’m an associate professor in cultural anthropology at the University of Tokyo. About me.

How to approach a research proposal (in Japanese)

Masaharu Kawano at Tokyo Metropolitan University has translated and published my posts on formulating research questions and the literature review into Japanese. You can find links to these here.

An Overdue Update (2025)

It’s important to show occasional signs of life on a personal blog. It’s been nearly two years since my last post, and a lot has happened since then, so here is an update on recent activities.

In April 2024, I left my position at Victoria University of Wellington to join the Department of Cultural Anthropology at the University of Tokyo. I was fortunate enough to receive the “Excellent Young Researcher” award, which provides generous support for my work for my first five years. Related links are on the About Me page.

The move from New Zealand to Japan ate up a considerable chunk of my time last year, but I’ve been continuing at a slow but steady pace with research on digital platforms in the university (with Lorena Gibson; we’ve presented this work at recent ASAANZ and 4S conferences), human-machine interface engineering, the history of cybernetics, and a few other things. Last year was a wash for publications and presentations, but 2026 will be a recovery.

My colleagues and I in the editorial collective of the Engaging Science, Technology, and Society journal were awarded the Society for Social Studies of Science Infrastructure award at the 2024 meeting in Amsterdam.

I was elected to 4S Council for a three-year term, where I’ll work with the incoming president Wen-Hua Kuo and a group of excellent colleagues to serve the STS community. I was also invited to be a member of the Editorial Board of Science, Technology and Human Values.

On the blog side, visitors seem to be coming to read my posts on how to formulate questions for a research proposal, and how to think about literature reviews. These are being translated for publication in Japanese early next year, and I have a third post in draft on writing about methods which I hope will be done some day.

Reconstructing the Anthro Blogosphere with RSS

Updated (2023/12/27): I’ve updated the OPML list of subscriptions with some additional entries from this post on Anthrodendum.

To start, let me pour one out for Anthrodendum, the anthropology blog that may not have started it all, but which was for many the one pre-social media hub for anthropology online. When I was a graduate student, it was invaluable for me as a resource for keeping in tune with the conversations taking place in American anthropology, and played no small part in my decision to do a PhD in anthropology. It also inspired the work that a scrappy group of then graduate students did to imagine what the Society for Cultural Anthropology could do online. Thanks to all involved.

With the end of Anthrodendum, and the dissolution of the once vibrant community on Twitter, it’s becoming hard to keep track of what’s going on with anthropology online. At the moment, most of my anthropology news comes via the remainders of Twitter, Bluesky, and a little bit of Mastodon. But my most useful source of information continues to be Facebook, where most of my friend list is anthropology-related.

Facebook is great for this, but as the recent Twitter debacle has reiterated, it’s not good to rely on one platform. It’s not a very shareable solution either.

Blogs were and continue to be a reasonable solution to this situation. They can be easily linked to each other, moved to new servers and software if necessary, and can give the blogger control over things in a way that Facebook would never allow.

Where Facebook and other large social networking services excel, however, is in discoverability and usability. They make it extremely easy to find new things that may be of interest to you, and they do it with relatively clear and uncluttered interfaces. When the choice is between Facebook and a massive list of browser bookmarks that you have to manually wade through, there really is no choice.

However, there is a pre-social network technology that can help with this: RSS.

(Another major obstacle is posting content. It’s not as easy to start and maintain a blog as it is to just post on Facebook or Twitter, but it’s not impossible or expensive.)

RSS

RSS stands for “Really Simple Syndication.” It’s a now decades-old system, based on the XML file format, that websites can use to provide an easy way for people to subscribe to updates. Basically, an RSS file (or sometimes a similar “Atom” file) is a list of posts that is updated whenever a new item goes up on a blog. This was an extremely common way for people to follow blogs in the pre-social network era. (RSS is also the foundation of podcasts.) You’d just collect a list of URLs to RSS files at different blogs, and you’d have a piece of software that would periodically download the newest lists of posts and present them to you in an email-like interface. It is still built into most major blogging platforms.
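If you’re curious what one of these files looks like on the inside, here is a minimal sketch in Python that reads the list of posts out of an RSS 2.0 document. The sample feed, its titles, and the `example.com` URLs are made up for illustration, and `list_posts` is just a name I’ve picked; a real newsreader does far more (Atom support, caching, error handling).

```python
import xml.etree.ElementTree as ET

# A made-up, minimal RSS 2.0 document, for illustration only.
SAMPLE_RSS = """<rss version="2.0">
  <channel>
    <title>Example Anthropology Blog</title>
    <link>https://example.com/</link>
    <description>A hypothetical blog</description>
    <item>
      <title>Second post</title>
      <link>https://example.com/second-post</link>
      <pubDate>Tue, 02 Jan 2024 09:00:00 +0000</pubDate>
    </item>
    <item>
      <title>First post</title>
      <link>https://example.com/first-post</link>
      <pubDate>Mon, 01 Jan 2024 09:00:00 +0000</pubDate>
    </item>
  </channel>
</rss>
"""


def list_posts(rss_text):
    """Return (title, link) pairs for each <item> in an RSS 2.0 document."""
    root = ET.fromstring(rss_text)
    posts = []
    # In RSS 2.0, posts are <item> elements nested inside <channel>.
    for item in root.findall("./channel/item"):
        title = item.findtext("title", default="")
        link = item.findtext("link", default="")
        posts.append((title, link))
    return posts


for title, link in list_posts(SAMPLE_RSS):
    print(f"{title}: {link}")
```

A newsreader essentially does this for every feed you subscribe to, on a schedule, and remembers which items you have already seen.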

The piece of software that you use to subscribe to and manage RSS feeds is called a newsreader. There are web-based ones (once upon a time, the best was Google Reader, which died an untimely death); Feedly is a popular one, though it appears to have gone through an AI-inspired change.

By far, however, the best readers are apps that you download onto your own device.

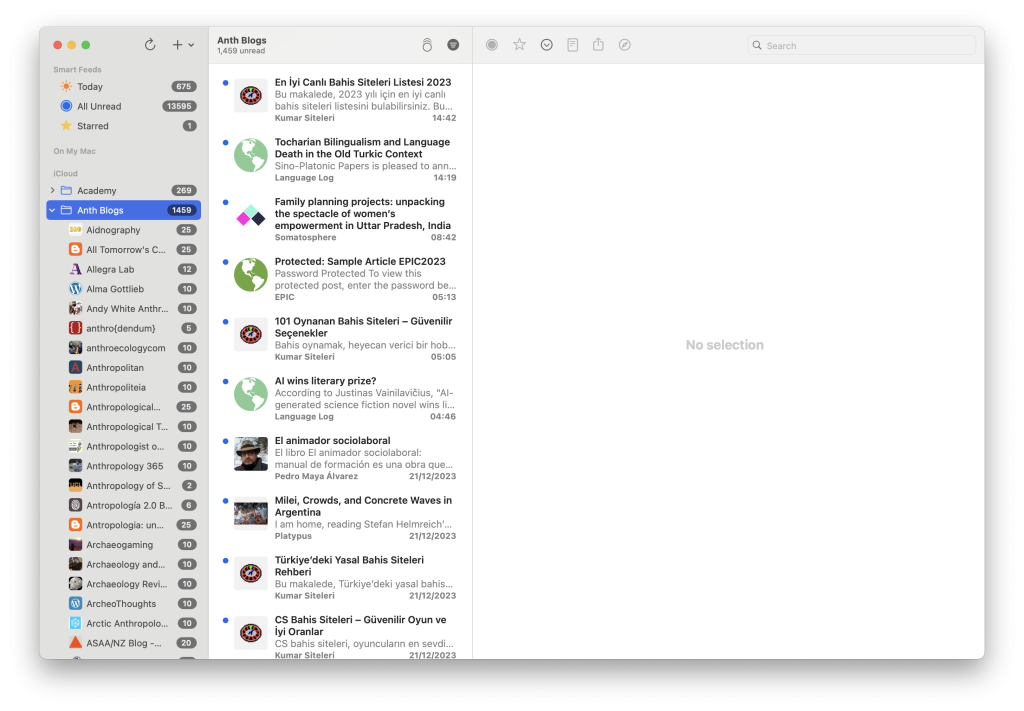

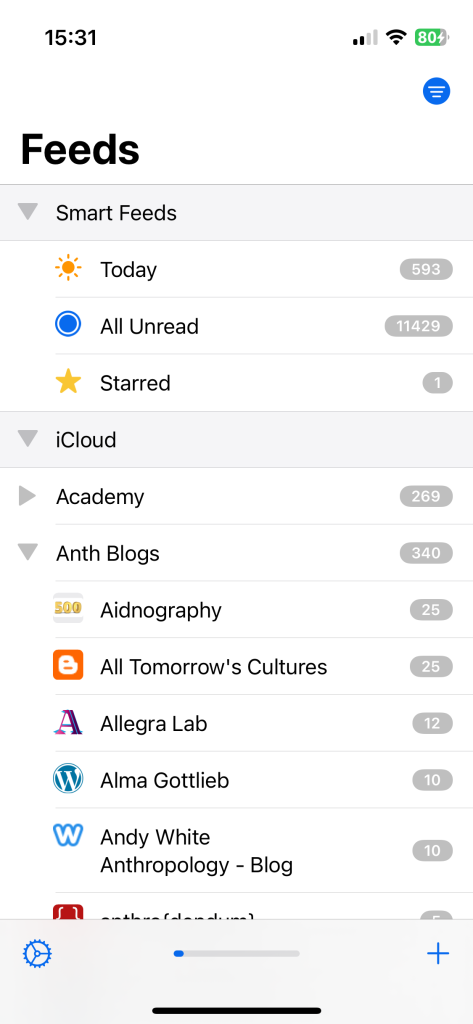

I use a very good free one called NetNewsWire, which is available for macOS and iOS devices. It has a long history, and is solid. It will sync your feeds via iCloud between your computer and your phone, and has a quick and pleasant interface for browsing the news.

You can download NetNewsWire for the Mac and find a link to the App Store for the iOS version here.

When you click or tap on a site’s name, you’ll be presented with a list of recent items posted on that site, and from there you can click through to open a browser window which will take you directly to the item on that site. In many cases, you’ll be able to read some or all of the item text without going to a browser at all.

As you’ll be able to see from the screenshots, you can arrange feeds from different websites into folders, browse items from sites individually or collectively in folders, and star items to return to later.

Finding RSS Feeds to Subscribe to

Discovering news feeds to subscribe to can be very easy, or impossible, depending on the site in question. (NetNewsWire comes with a curated selection of sites pre-subscribed, but it’s very heavy on tech news.)

Most sites, especially large commercial or custom-built ones, do not provide RSS feeds at all. They’d rather you subscribe to them, or follow them on Facebook or Twitter, partly because it’s much easier for them to track your usage this way.

However, many will have RSS feeds, even if they are hidden away.

The easiest way to subscribe to a blog is to look for the RSS icon or a link mentioning RSS or Atom somewhere on the page. In most cases, you should right-click or control-click that icon or link and copy the link address. Then you can go to NetNewsWire and click/tap “Add Feed.” The link you just copied should already appear there, so if you tap Add then that site will join your list of subscriptions, and NetNewsWire will download a list of recent items.

In many cases, a site will have a feed even if it does not advertise one at all. Many blogs run on a piece of software called WordPress, which has RSS built in and usually turned on. If you see a website you’d like to subscribe to but don’t see an RSS link, try taking the site’s URL, adding “/feed” to the end, and giving that address to NetNewsWire. (e.g. To subscribe to “https://anthroperson.wordpress.com/” add “https://anthroperson.wordpress.com/feed”)
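The “/feed” trick can be sketched in a few lines of Python. This is only an illustration: `guess_wordpress_feed` is a hypothetical helper name, it assumes the site is a WordPress blog with feeds turned on, and it does not check that the resulting address actually exists.

```python
def guess_wordpress_feed(site_url):
    """Guess a WordPress feed address by appending "/feed" to the site URL."""
    # Drop any trailing slash first so we don't end up with "//feed".
    return site_url.rstrip("/") + "/feed"


print(guess_wordpress_feed("https://anthroperson.wordpress.com/"))
```

If the guess is wrong, the newsreader will simply report that it couldn’t find a feed at that address.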

One nice thing about most RSS readers is that you can export your list of subscriptions, and share them with other people, who will be able to import that list into their own readers. This is usually done using an “OPML” file.
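An OPML file is itself just a small XML document in which each subscription is an `outline` element carrying the feed address in an `xmlUrl` attribute. As a rough sketch, assuming a made-up subscription list (the blog names and `example.com`/`example.org` addresses below are hypothetical), you could list the feeds in one with Python like this:

```python
import xml.etree.ElementTree as ET

# A made-up OPML subscription list, for illustration only.
SAMPLE_OPML = """<opml version="2.0">
  <head><title>Hypothetical subscriptions</title></head>
  <body>
    <outline text="Anthropology" title="Anthropology">
      <outline type="rss" text="Example Blog A"
               xmlUrl="https://example.com/a/feed" htmlUrl="https://example.com/a/"/>
      <outline type="rss" text="Example Blog B"
               xmlUrl="https://example.org/b/feed" htmlUrl="https://example.org/b/"/>
    </outline>
  </body>
</opml>
"""


def list_feeds(opml_text):
    """Return (name, feed URL) pairs for every subscription in an OPML file."""
    root = ET.fromstring(opml_text)
    feeds = []
    # Folders are also <outline> elements, but only feeds carry an xmlUrl.
    for outline in root.iter("outline"):
        url = outline.get("xmlUrl")
        if url:
            feeds.append((outline.get("text", ""), url))
    return feeds


for name, url in list_feeds(SAMPLE_OPML):
    print(f"{name}: {url}")
```

In practice you won’t need to do this by hand, since the newsreader’s import function reads the file for you, but it shows why these lists are so easy to share and edit.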

For your convenience, I’ve taken all of the blogs on this list from 2021, removed any that have closed or been inactive since 2020, and put them in this OPML file. Right-click or control-click on that link, and download the linked file. Then go to NetNewsWire, choose “Import Subscriptions,” and select the downloaded file (“Subscriptions-anthropology.opml”). You’ll then be subscribed to some 90+ anthropology blogs. (I’ve added the subscriptions to my site and my colleague Lorena Gibson’s for good measure.)

The list is quite a different mix of things than I get on the major social networks, but perhaps with time, and growing collective investment in blogging, RSS will become better than any of them.

So go download NetNewsWire, import the subscriptions, and let them be your portal to anthropology online.

DUE TO FALLING ENROLLMENTS, WE WILL NO LONGER OFFER COURSES IN ROMULAN AT STARFLEET ACADEMY

Inspired by things linked in these recent posts, I wrote something for McSweeney’s.

Plato’s cave regrets to inform you it will be raising its rent

As the costs of maintaining a cave meant to trap you in your ignorance increase year after year, we want you to know, from the bottom of our hearts, that we, too, are suffering. We get that times are tough, and we hope you can extend that sympathy to us, the managers of your cave.